How to improve your AGENTS.md file?

Insights from the First Empirical Study on Context Files for Coding Agents

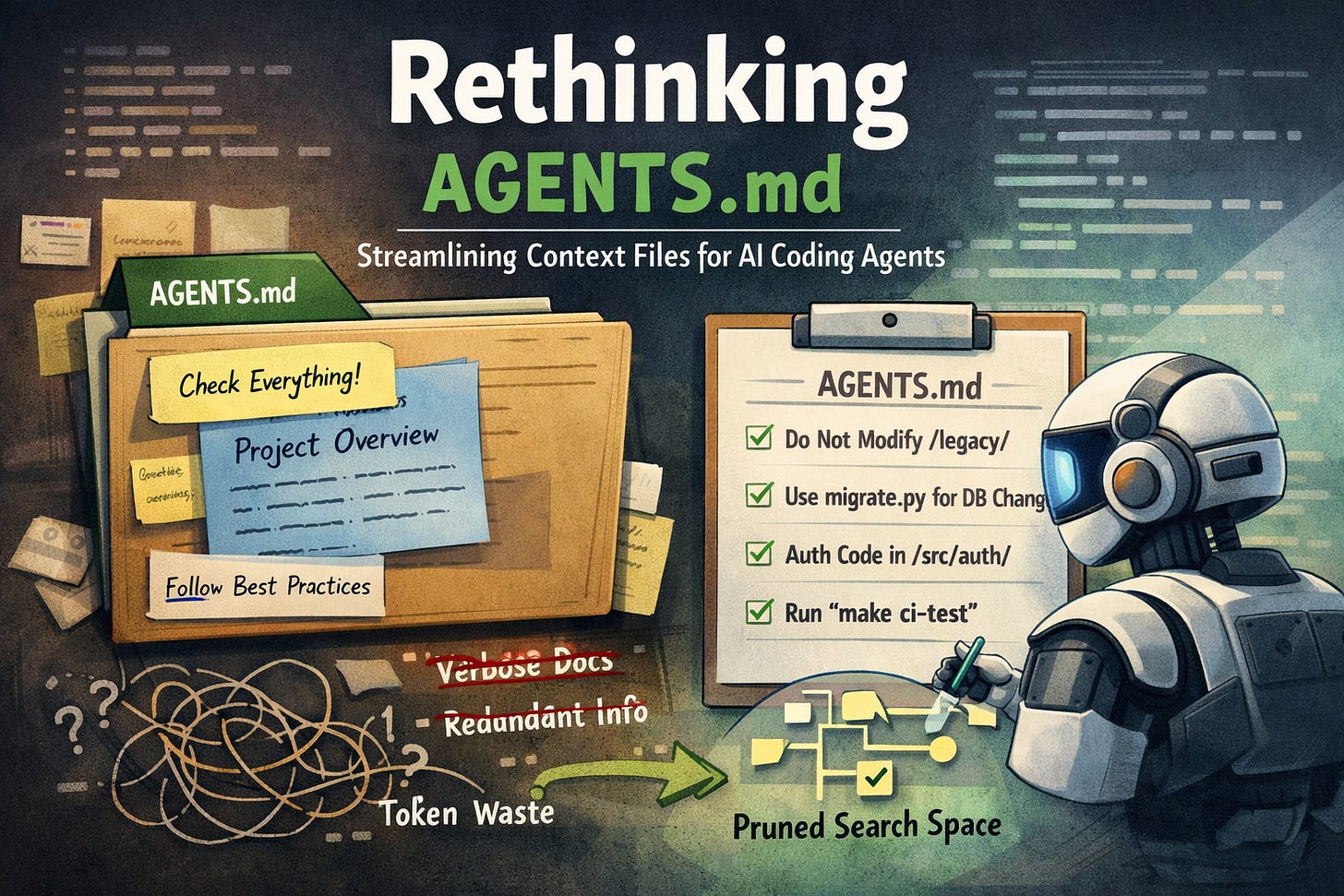

The growing adoption of repository-level context files such as AGENTS.md or CLAUDE.md reflects a broader intuition in AI-assisted software engineering: that providing structured, high-level information about a codebase should improve the performance of coding agents. The logic appears straightforward. If an agent understands architectural constraints, directory structure, and workflow conventions in advance, it should be able to navigate the repository more efficiently and produce higher-quality modifications.

However, the recent paper “Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?” (arXiv:2602.11988) offers the first careful empirical evaluation of this assumption.

Its findings suggest that the relationship between added context and improved performance is far more delicate than commonly believed.

What the paper actually tested

The authors evaluate coding agents under three controlled conditions:

No repository-level context file.

An LLM-generated AGENTS.md created using recommended prompting practices.

A developer-written AGENTS.md drawn from real repositories.

The evaluation spans two complementary benchmarks:

SWE-bench Lite (a standardized bug-fixing benchmark).

A newly constructed benchmark, AgentBench, consisting of 138 real GitHub issues from repositories that already include AGENTS.md files.

The study measures not only task success rates but also inference cost (token usage) and behavioral characteristics such as file exploration patterns and reasoning depth.

This design allows the authors to separate intuition from measurable performance.

The main findings

The results are instructive:

LLM-generated context files frequently reduce task success rates relative to the no-context baseline.

Developer-written context files provide, at best, modest improvements.

Both types of context files increase inference cost by approximately 20%.

Importantly, the performance degradation observed with LLM-generated files does not stem from agents ignoring them. On the contrary, agents actively process and follow the instructions provided. The difficulty arises because additional instructions often expand the agent’s search space rather than constraining it.

In effect, the added context increases computational effort without proportionally reducing uncertainty about the solution.

Why additional context can degrade performance

The mechanisms identified in the paper are conceptually straightforward but practically significant.

1. Redundant information

When AGENTS.md reiterates architectural details, directory descriptions, or conventions that are already evident from the repository structure, the agent must still allocate tokens to process this information. Unlike human readers, large language models do not selectively skim redundant prose. Every token consumes attention and contributes to context length.

If the repeated information does not reduce uncertainty about the task, it simply adds computational overhead.

2. Overly broad procedural guidance

Many context files include general advice such as “explore the repository thoroughly,” “run comprehensive tests,” or “respect architectural boundaries.” While reasonable for human developers, such guidance can increase the effective branching factor of an agent’s search process. Agents tend to operationalize these instructions literally, opening more files, running more test cycles, and expanding reasoning trajectories even when the task is localized.

The result is greater computational effort without any guaranteed improvement in solution quality.
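A deliberately simplified toy model makes the branching-factor point concrete. Assume (this is an illustration, not the paper's analysis) that an agent weighs b options at each of d sequential decisions, so the number of distinct trajectories is b^d. The numbers used below are hypothetical.

```python
def trajectory_count(branching_factor: int, depth: int) -> int:
    """Number of distinct paths in a uniform search tree (toy model)."""
    return branching_factor ** depth


# Hypothetical numbers: a focused agent weighing 3 options per step,
# versus one told to "explore thoroughly" that weighs 6, over 4 steps.
focused = trajectory_count(3, 4)
broad = trajectory_count(6, 4)
print(focused, broad, broad // focused)
```

Doubling the options per step multiplies the tree by 2^d, here 16x at depth 4. Real agents prune aggressively, so this conveys how growth compounds rather than estimating actual cost.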

3. Context as a competing resource

Language models operate within finite context windows and practical token budgets. Every token devoted to meta-information about the repository is a token not devoted to reasoning about the specific issue at hand. The study suggests that context files must therefore be evaluated not only in terms of informational content but also in terms of opportunity cost.

Use tokens only for information that is unique and meaningfully reduces uncertainty.

When context files actually help

The paper does not argue that repository-level context is inherently detrimental. Rather, it demonstrates that context is beneficial primarily when it contains information that cannot be cheaply inferred from the code itself.

In experiments where repository documentation was reduced or removed, context files became more helpful. This finding is conceptually important. It indicates that context is most valuable when it compensates for missing structural or operational signals.

The decisive criterion, therefore, is not volume or repetition but informational leverage.

How to improve AGENTS.md in practice

The empirical findings suggest a principled approach to writing repository-level context files.

1. Eliminate inferable information

If a fact can be derived quickly from:

directory structure,

naming conventions,

inline documentation,

type annotations,

or configuration files,

then it does not belong in AGENTS.md.

The file should not duplicate the README in condensed form, nor should it provide architectural summaries that are already explicit in the codebase.

Redundancy increases cost without increasing clarity.
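This first rule can be partially automated. The sketch below is a hypothetical lint (the function names are illustrative, not an existing tool) that flags AGENTS.md lines whose normalized text already appears as a line in the README. Exact-line matching only catches literal duplication; paraphrased redundancy still needs human review.

```python
import re


def normalize(line: str) -> str:
    """Lowercase, strip leading markdown markers, drop most punctuation."""
    line = re.sub(r"^[\s#*\->]+", "", line.lower())
    line = re.sub(r"[^\w\s/.]", "", line)  # keep path characters "/" and "."
    return " ".join(line.split())


def redundant_lines(agents_md: str, readme: str) -> list[str]:
    """Return AGENTS.md lines whose normalized text also appears as a README line."""
    readme_lines = {normalize(line) for line in readme.splitlines()}
    readme_lines.discard("")  # blank lines should never count as duplicates
    return [
        line
        for line in agents_md.splitlines()
        if normalize(line) in readme_lines
    ]


# Hypothetical file contents for illustration.
agents = (
    "# AGENTS.md\n"
    "- Source code lives in src/.\n"
    "- Do NOT modify files under /legacy/.\n"
)
readme = "## Layout\nSource code lives in src/.\n"
print(redundant_lines(agents, readme))
```

In a real repository you might load both files with pathlib.Path(...).read_text(); the file locations are assumptions about your layout.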

2. Encode non-derivable constraints

High-value content includes constraints that are:

implicit but not structurally encoded,

operational rather than descriptive,

capable of invalidating entire classes of solutions.

Examples include:

“Files under /legacy/ must not be modified.”

“Database schema changes must be performed exclusively through migrate.py.”

“Authentication state must never be written directly; use the event dispatcher in events.py.”

“Integration tests are executed only via make ci-test; plain pytest does not trigger them.”

Each of these statements meaningfully reduces the search space.

3. Replace general advice with explicit boundaries

Broad guidance encourages exploration; boundaries constrain it.

Instead of stating that the agent should “explore the repository thoroughly,” specify which directories are relevant to particular subsystems. Instead of advising respect for architecture, define concrete interfaces that must not be bypassed.

The objective is not to encourage diligence but to reduce ambiguity.

4. Optimize for search space reduction

A useful mental model is to treat AGENTS.md as a search space pruning mechanism. Its function is to:

narrow candidate files,

identify forbidden modification zones,

clarify hidden couplings,

specify non-obvious operational requirements.

If a sentence does not reduce the branching factor of the solution space, it is unlikely to justify its token cost.
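The pruning view can be sketched as a filter over candidate files. This is a minimal illustration, assuming the constraints are encoded as data mirroring the example AGENTS.md in this article (paths written relative to the repository root, subsystem keys hypothetical); it is not how any particular agent actually consumes the file.

```python
# Hypothetical constraints mirroring the example AGENTS.md in this article.
# Paths are relative to the repository root (an assumption).
FORBIDDEN_PREFIXES = ("legacy/",)
RELEVANT_LOCATIONS = {
    "auth": ("src/core/auth/", "src/core/permissions.py"),
    "billing": ("src/billing/state_machine.py",),
}


def is_under(path: str, location: str) -> bool:
    """True if path is the given file, or lies inside the given directory."""
    if location.endswith("/"):
        return path.startswith(location)
    return path == location


def prune_candidates(files: list[str], subsystem: str) -> list[str]:
    """Narrow files to the subsystem's locations, then drop forbidden zones."""
    locations = RELEVANT_LOCATIONS.get(subsystem)
    if locations is None:
        candidates = files  # no location hint: keep everything for now
    else:
        candidates = [f for f in files if any(is_under(f, loc) for loc in locations)]
    return [f for f in candidates if not any(is_under(f, p) for p in FORBIDDEN_PREFIXES)]


files = [
    "src/core/auth/login.py",
    "src/core/permissions.py",
    "src/billing/state_machine.py",
    "legacy/auth_old.py",
    "docs/auth.md",
]
print(prune_candidates(files, "auth"))
print(prune_candidates(files, "search"))
```

Relevant locations narrow the set, and forbidden zones remove files even when no subsystem hint applies; each rule removes whole groups of files instead of adding work.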

5. Constrain length deliberately

Given the observed ~20% increase in inference cost, brevity is not aesthetic minimalism but computational prudence. An effective AGENTS.md should be substantially shorter than onboarding documentation for a human developer.

The AGENTS.md should read as a list of high-impact constraints rather than a narrative overview.
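A simple length guard can help keep the file short. The sketch assumes roughly four characters per token, a common rule of thumb for English text that real tokenizers only approximate, and a hypothetical 500-token ceiling chosen for illustration rather than taken from the paper. It also surfaces the longest lines, which are often narrative prose rather than constraints.

```python
import math

CHARS_PER_TOKEN = 4  # rough heuristic for English text; real tokenizers vary
TOKEN_BUDGET = 500   # hypothetical ceiling, not a figure from the paper


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def check_length(
    agents_md: str, budget: int = TOKEN_BUDGET, top_n: int = 3
) -> tuple[int, bool, list[str]]:
    """Return (estimated tokens, within budget?, longest non-blank lines)."""
    tokens = estimate_tokens(agents_md)
    lines = [line for line in agents_md.splitlines() if line.strip()]
    longest = sorted(lines, key=len, reverse=True)[:top_n]
    return tokens, tokens <= budget, longest


sample = (
    "# AGENTS.md\n"
    "- Do NOT modify files under /legacy/.\n"
    "- Run full test suite with: make ci-test\n"
)
tokens, ok, longest = check_length(sample)
print(tokens, ok, longest[0])
```

For exact counts, substitute the tokenizer of the model you actually use.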

A minimal, research-aligned AGENTS.md example

# AGENTS.md

## Scope Constraints

- Do NOT modify files under /legacy/.

- Public API contracts in /api/ must remain backward compatible.

- Database schema changes must go through migrate.py only.

## Relevant Locations

- Authentication logic: src/core/auth/

- Authorization rules: src/core/permissions.py

- Billing state transitions: src/billing/state_machine.py

## Non-Obvious Couplings

- User role changes require cache invalidation via cache_roles().

- Billing status updates emit events through events.py; direct state mutation is invalid.

## Test Execution

- Run full test suite with: make ci-test

- Plain `pytest` does NOT execute integration tests.

Conceptual takeaway

The broader lesson of the paper extends beyond AGENTS.md. It concerns context engineering more generally.

In LLM-based systems, information is not neutral. Every additional token competes for limited attention and computational budget. Context is beneficial only insofar as it reduces uncertainty more than it increases processing cost.

For repository-level context files, this implies a shift in perspective. They should not function as condensed documentation. Instead, they should encode decision-critical constraints that cannot be inferred from static analysis.

In that sense, the quality of an AGENTS.md file is not measured by its completeness, but by its ability to eliminate entire categories of incorrect reasoning paths.

Your experiences

I would genuinely love to hear your perspective. If you have experimented with refining your AGENTS.md or other repository-level context files, please share what changes you made, what worked, and what did not! In particular, I am curious whether tightening constraints, reducing redundancy, or restructuring information had measurable effects on agent performance or cost. Your practical experiences and observations would add valuable depth to this discussion.

Enjoy the read!